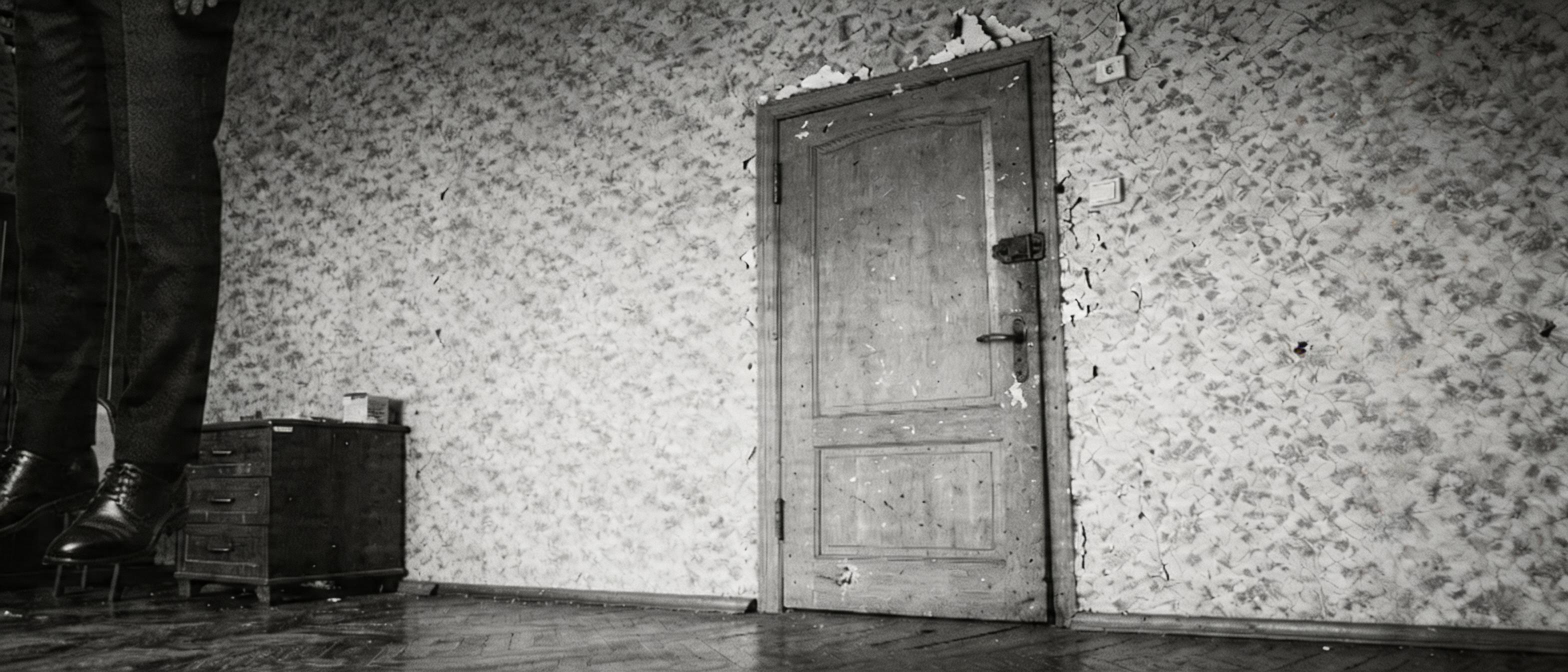

Evening — back to the Hitchcock plan: hanging legs on the left, closed door on the right. Task: build a "door + legs" mockup, run it through Seedream, get the final shot1_door_with_foot frame.

The user noted in the morning that the legs in the previous draft were small:

"Legs really small, you can see for yourself."

Turned out shot1_door_closed.png was actually 892×382 — the agent had mixed up the numbers and built the mockup at the wrong scale. Redid it: cropped only legs and shoes from body_ref.png, no floor.

Built the mockup: hanging body on the left, door on the right, torso going off-frame upward. The seam around the legs is visible — that's a feature, layout instruction.

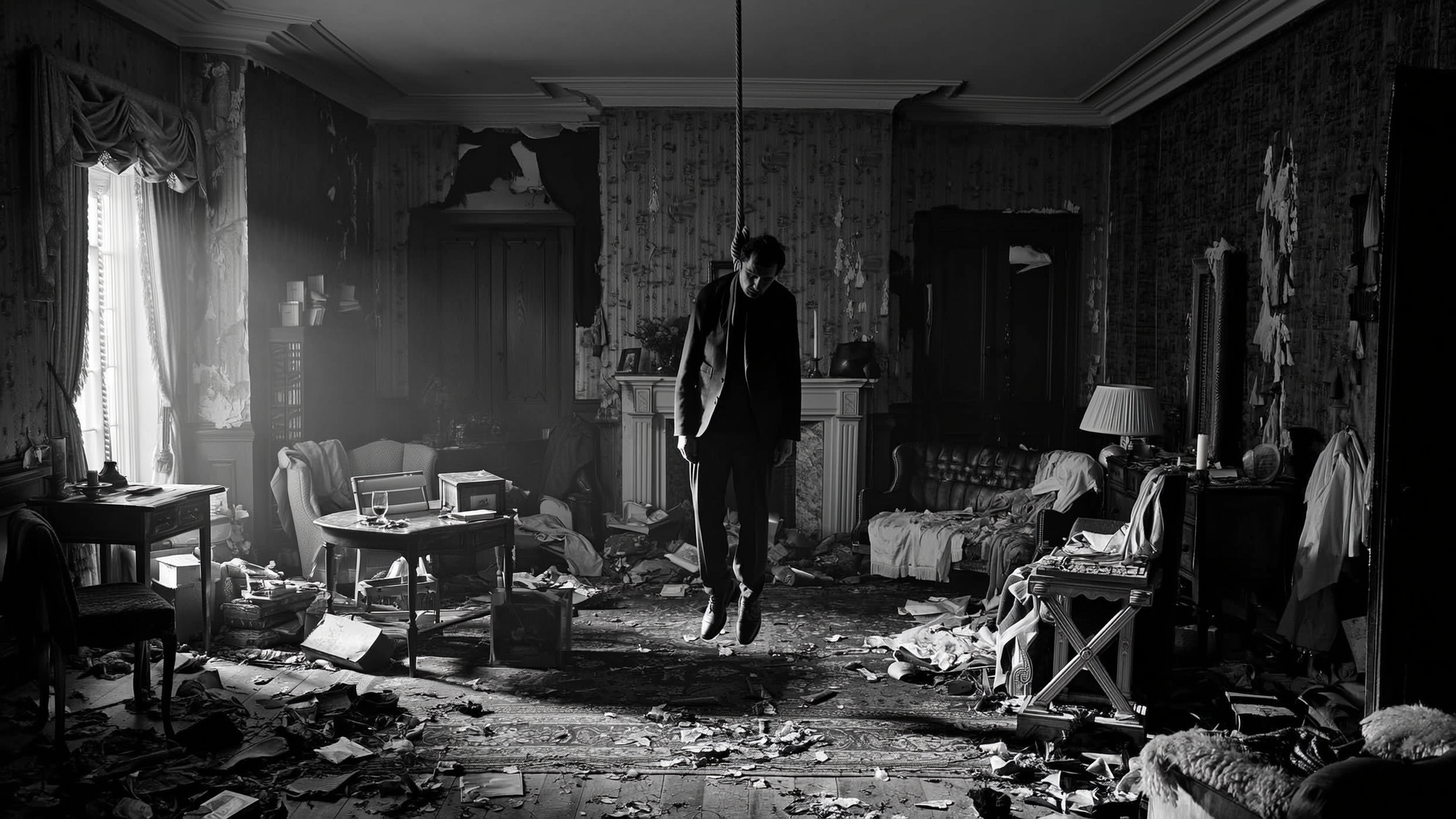

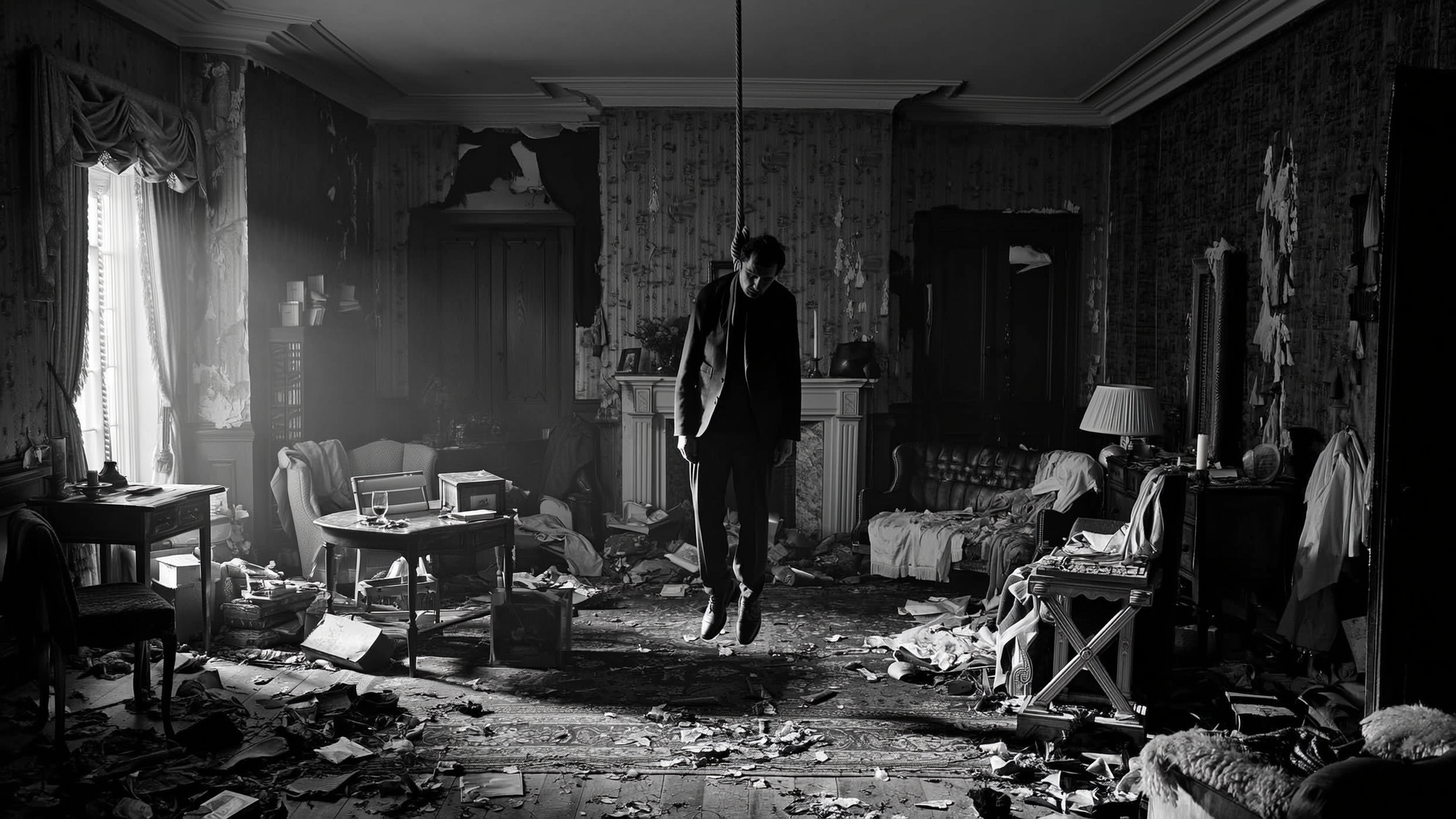

Sent it to Seedream 5.0-lite via Evolink: shot1_door_closed.png + mockup as two refs. 37 seconds, got shot1_door_with_foot.png (first version):

✅ Legs hanging on the left, torso off-frame.

✅ Door on the right, closed, waiting.

✅ Wallpaper, drawer chest, parquet — all in place.

✅ Boots and pants dark, like the suit in shot1.

The Hitchcock device works — viewer knows what's in the room, the door becomes ominous.

"Nope, crap, the boots should be from a slightly different angle."

body_ref.png was shot head-on, but our door shot is at 45° from the left. Legs need to be at ¾, not straight. Added "three-quarter angle, not head-on" to the prompt — Seedream predictably ignored: it can't do 3D rotation from text, it copies orientation from the ref.

First run with the "three-quarter angle" instruction → shot1_door_with_foot_v2.png: legs stayed frontal (Seedream can't do 3D rotation from text):

Need a new pose-ref — "hanging body at the right angle". Text-to-image, no refs:

body_ref_34angle.png — side-back profile, body facing right (toward the door). "Wrong direction" — said the user. Mirroring in PIL, free.body_ref_34front.png — attempt at "front-¾". Toes pointing right — still wrong.body_ref_34front_v2.png — added explicit "STRICTLY VERTICAL — torso, arms, legs hang straight down under gravity as dead weight. Only the head is slumped forward at the neck". Worked: body vertical, head slumped, ¾ rotation toward camera.User:

"Why flip? Without the flip toes already point left."

Right. Use as-is. Crop legs, build mockup, send — and get shot1_door_with_foot_v3 / _v4. But again frontal legs in the frame, not ¾.

After several iterations where Seedream stubbornly returned the old legs, the user formulated a hypothesis:

"Important point — it's not pulling some abstract frontal legs, it's pulling literally the same ones that were in the first mockup. Theory: it sees the same filename? And gives you the old URL."

Evolink URLs do contain the filename:

https://files.evolink.ai/00551HC8TAUP94NFJS/images/png/shot1_door_with_foot_mockup.png

When shot1_door_with_foot_mockup.png is overwritten locally with new content and uploaded — Evolink dedupes by name and returns the old URL with a cached first version. Seedream gets the very first mockup (with frontal legs), not the fresh one.

Fix in seedream_gen.py — added the first 12 chars of the SHA-256 of the content to the upload name:

hash_prefix = hashlib.sha256(raw).hexdigest()[:12]

filename = f"{path.stem}_{hash_prefix}{path.suffix}"

Re-run — and the legs are actually at ¾ angle, with the right asymmetry (right leg forward, left slightly back). A critical bug found by the user. Every previous iteration, Seedream had been getting the first mockup, no matter how the local file changed.

"Yeah, and you cropped the legs a little too low. And the legs themselves should be a bit further left."

"A bit larger legs, by 20%."

v6: legs larger, on the very left edge of the frame, boots fully in ¾ angle. But a new problem appeared — Seedream sprinkled dirt onto the wallpaper around the legs, interpreting the sharp silhouette edge as "something stained".

First fix attempt: cropped down to the very sole without picking up the gray floor from the pose-ref (v7). Result — "background gone, but so are the boot tips". v8 — middle ground, but the user:

"Not true, toes got cut off, the background didn't."

The agent realized: Seedream is lazy — it pulls everything in the mockup. Gray rectangle around the legs in the collage → gray patch in the output.

Solution — silhouette via brightness mask (dark pixels = legs, light = transparent). Threshold 110 → v9: silhouette on the Stalinka wallpaper, no gray rectangle. But:

"Background not cut (why isn't it cutting?). And the toes got slightly cut too."

Increased the threshold, removed the blur. Mockup looks clean. Sent it — SSL error on Evolink (SSLEOFError), five attempts in a row. Waited a minute, went through.

v10: model sprinkled visual debris around the legs. Explicitly forbade debris, grime, dark smudges in the prompt (v11) — cleaner, but artifacts still there. Tried with a soft mask and edge blur (v12):

✅ Stalinka wallpaper behind the legs without gray patches.

⚠️ Dark spots on the wall look like part of the pattern, but..."Nope, dirt around the legs, just blurred now."

And the fundamental conclusion:

pip install rembg — alpha matting library on U2Net. 30 seconds — 176 MB model downloaded, installed. Rewrote the mockup assembly: instead of a brightness threshold — remove(legs_crop) (RGBA with clean alpha), paste with alpha mask.

v13: wallpaper around the legs clean — no halo, no dirt. Legs hanging on the left, boots in ¾, door on the right, M1 location preserved.

"OK, fine, accept."

Second approved frame. Key lesson: for composition mockups — strictly U2Net segmentation, threshold masks don't work.

The user immediately pivoted to video:

"And then on top of this frame let's generate a Seedance video: first a couple of seconds the legs sway, then the door is kicked in and the bandit walks in (we have a character card for him)."

Wrote seedance_video.py for the /v1/videos/generations endpoint, model seedance-2.0-reference-to-video, up to 9 refs + prompt.

v1: 5s, 720p, 21:9. Refs: shot1_door_with_foot.png + char_bandit.jpg. Without @image tags — agent missed the convention from the guide.

v2: same run, but with explicit tags — @image1 as first frame, @image2 as character. Per the Seedance guide, ref roles are marked in the prompt text.

"Came out pretty good. Now we need a version with timing."

The user shared a format example from MindStudio:

Global style: cinematic, 4K, cold colors.

[0s–4s]: Wide shot, static.

[4s–7s]: Medium shot, slow push in.

[7s–10s]: Close-up.

And the brief: 10 seconds, 3 seconds before the door is kicked, then everything as before, the gaze longer.

v3 with timecodes. 458 sec generation, 3.2 MB.

"In the 10s video the delay before the start didn't work, alas. And the kick is worse than in the short one. v2 still the best."

The agent rewrote per the guide: prefix Style:/Duration:/Camera:, one camera directive (static locked-off), explicit ref roles, timecodes in [MM:SS-MM:SS] format, negative prompts at the end.

v4, 10 sec, 346 sec generation, 3.4 MB:

Style: cinematic black and white 35mm film, grainy, deep blacks, high contrast.

Duration: 10s.

Camera: fixed, static locked-off wide shot throughout.

@Image1 as first frame and background environment.

@Image2 as character reference.

[00:00-00:03] dead silence, hanging legs sway slightly.

[00:03-00:05] door kicked open, slamming inward.

[00:05-00:10] bandit stands frozen, breathing heavily.

Avoid jitter. Avoid bent limbs. Avoid identity drift. Avoid camera movement.

User:

"3-sec pause is there, but the door isn't kicked sharply enough and the bandit walks in too quickly afterwards. And he's holding a gun — that's unwanted."

Edit: collapsed the door kick to 1 second ([00:03-00:04]), gave 3 seconds for the silhouette in the doorway before the first step, explicitly in the prompt and in the character ref: "hands empty, no weapons, no gun".

Run → HTTP 402 insufficient_quota. One 10-sec request costs 135 Evolink credits, 30 left.

"Options: top up Evolink; WaveSpeed ($1 trial, probably spent); fast mode (

seedance-2.0-fast-reference-to-video). Until you top up, I'm not running anything. The v5 prompt is in the chat above, say when — I'll continue."

In an hour and a half:

- shot1_door_with_foot.png — approved as canon, via rembg + composition mockup.

- Found the Evolink CDN cache bug: dedupe by filename → new content doesn't reach the model. Fix: SHA-256 prefix in the name.

- rembg / U2Net entered the toolkit — for clean segmentation instead of threshold masks.

- Wrote seedance_video.py — reference-to-video pipeline, up to 9 refs.

- Derived the Seedance prompt form from the guide: Style/Duration/Camera + explicit @image roles + timecodes + negatives.

- v2 (5 sec, no timecodes) — benchmark. v4 (10 sec, per guide) gives a 3-sec pause but a softer kick.

- Day stopped on insufficient_quota, v5 prompt waiting for top-up.