The conversation kicked off at 06:31 — the agent suggested laying out the shots in a grid, separating the recurring visual anchors of the location from the unique ones. The user didn't quite get the questions and at 06:32 sent over пиньята.txt:

"Hold on, I don't really get your questions. Do you have the full Piñata text? If not — here it is. What's the first step?"

The encoding was botched but the agent figured it out. The screenplay split into three physical spaces: hideout-BW (scenes 1, 2 before the corpse rips open, and 4 after the slap), hideout-color (scene 2 after the rip, before the slap), and village-trip (scene 3). Four scenes in the script.

Proposal: forget shots and action, start with one task — a picture of the HIDEOUT, the visual anchor for every shot in it.

The user clarified:

"So we start with the master shot? Like a wide angle? And what if I need a reverse angle — and how do I edit a master shot?"

The agent explained: a master shot is a visual identity anchor, not a wide establishing. It locks the wallpaper color, the lighting character, the key objects, the texture grit. Then the question: is the corpse part of the location or a separate entity? Answer — a Character. The corpse has states: hanging → swinging → torn open → remains; visual identity (clothes, age, decomposition) must stay consistent across scenes 1, 2, and 4.

The user asked about local Flux. He has a 7900 XTX, 24 GB VRAM — the hardware can run Flux.1 Kontext dev in FP16. But old Windows, no ROCm.

"ROCm isn't there and won't be, old Windows."

The agent ran through the options: ZLUDA (CUDA emulation, lottery), DirectML (slow). The user came back with a key argument:

"Why does llama.cpp work through Vulkan, what — you can't do images too?"

Turns out — you can. stable-diffusion.cpp from the same author (Gerganov), Vulkan backend without ROCm, support for Flux.1 Kontext dev since late 2024, GGUF Q4/Q8. Four days ago (April 11) they baked an embedded web UI into the binary (PR #1408).

Concrete links: binary sd-master-fd35047-bin-win-vulkan-x64.zip (23 MB) + weights flux1-kontext-dev-Q8_0.gguf (12.7 GB) + ae.safetensors + clip_l + t5-v1_1-xxl-encoder-Q8_0.gguf. Total ~20 GB.

At 07:31 the user reported: everything downloaded into c:\Users\boomyjee\Downloads\sdcpp\. The agent rewrote start.bat, created server.bat. First run — OOM on VAE encode: ref.png was 2848×1600, allocating a 3.5 GB buffer with only 6.7 GB free out of 24 GB. Fixed memory options.

It started. The numbers:

- Loading 4 models: 5 sec (~17 GB into VRAM)

- VAE encode ref: 7 sec

- CLIP+T5 encode prompt: 2 sec

- Sampling 20 steps: 180 sec (9 sec/step) — expected 2–3 sec/step

- VAE decode: 12 sec

- Total: 3:20 per generation

Second attempt gave the same speed. Flash Attention flags for the Vulkan backend silently no-op — not implemented. Verdict: locally on AMD via Vulkan Flux technically works, but 200 sec per edit is unacceptable for iteration.

The user asked about OpenRouter. They have FLUX.2 there (November 2025, newer than Kontext v1):

- flux.2-pro — $0.015/MP input + $0.03/MP output → ~4.5¢ per edit on 1 MP

- flux.2-flex — $0.06/MP → 12¢, but multi-reference editing

- flux.2-max — top tier

- flux.2-klein — cheap

"Let's just keep moving with one-off scripts, we're testing the concept. Generate a master plan and we'll look at it."

At 08:02 the first flux_gen.py was written, M1 generation kicked off — master shot of the hideout, B&W, view from the door. First run died with HTTP 400 "Request Moderated" — Flux hard-censors "noose/corpse/criminal". Rewrote the prompt without triggers: "abandoned old Soviet apartment, hanging lightbulb, crumpled newspapers, dust motes" — passed.

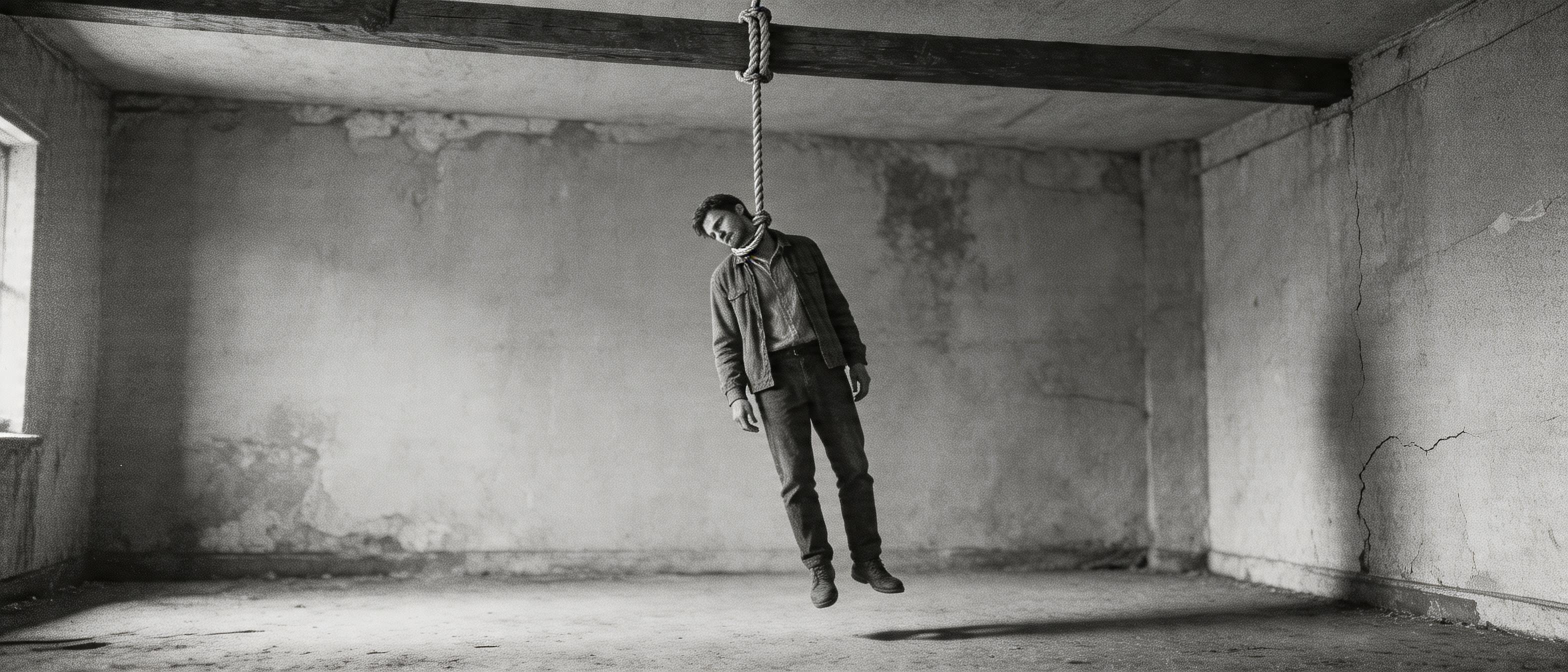

First image m1_priton.png — 12 seconds, 3 cents:

Nice atmosphere, but in the user's view — too cramped:

"From my angle it's tight in here, we need space and depth."

Reworked for cinemascope 2.39:1, doorways in the background:

The user cut in:

"Why the hell so many doors and why is the picture square? And why is the resolution so low?"

Flux's docs read worse than they could: turns out image_config: {aspect_ratio: "21:9"} goes in the payload, not in words in the prompt. Same for image_size. Fixed flux_gen.py — --aspect 21:9 --size 2048:

"Too much wreckage, nowhere to look for the goods. And we need just one door."

v4 stripped the wreckage and the unnecessary doorways, kept one door on the right, added a wardrobe, drawers, table, chair — "something to search":

"The ceilings look low to me."

v5 added "4-meter ceilings, crown molding, Stalinka-era apartment, herringbone parquet, floor-to-ceiling window":

Now it's a Stalinka. Size though, 1920×816 ≈ 1.57 MP — same result with --size 3072:

OpenRouter caps 21:9 output to ~2 MP across both models. Tried the top-tier flux.2-max — $0.10, 42 sec, got m1_priton_v7_max.png: 2048×880, 1.80 MP. Slightly sharper and more contrasty. Still not 4K, but it's the canonical M1:

"Let's start a decisions file. Flux-max is fine, we keep it. What's next?"

Created docs/movie_pipeline.md — to lock decisions and open questions.

The agent formulated the main untested hypothesis: multi-ref edit preserves location identity. If it doesn't, the whole "M1 + Character → shot" architecture falls apart.

At 08:29 the user sent over char_bandit.jpg, char_bandit_2.png, char_body.png, char_wife.png — character sheets from Seedance-2-character. Each sheet has 8 views and expressions of the character. The idea: feed the sheet as a ref into multi-ref edit.

First test on flux.2-flex (multi-ref): M1 + char_body → man hanging in a noose over the table.

Location preserved one-to-one — same wallpaper, herringbone parquet, wardrobe, window, molding. The character reads. But the model didn't hang him — it sat him on the couch. The user caught it instantly:

"No, this is nonsense, like every pose of the character is a new character? Are you serious?"

Right, this was a dead end: the agent was trying to bolt architecture-crutches around Flux's safety filter. The right move — don't bend the model to your architecture, pick a different model for the unstitchable shot.

The user reminded: character sheets are made by seedance-2-character from generator.py — only MUAPI has them. And for composition you need a compositor. An Evolink key turned up in the config. Wrote seedream_gen.py for the /v1/images/generations endpoint, model doubao-seedream-5.0-lite.

Same test: M1 + char_body on Seedream:

Location 1:1, character reads, model actually did hang him, but the feet are standing on the table. The agent in his euphoria called this a win. The user sobered him up:

"Are you serious? The dude is just standing on the table and the face doesn't read."

And right after — the key question:

"What makes you think it's censorship and not just a bad prompt?"

Re-read the prompt. It said "feet dangling several centimeters above the floor" — the model did exactly that: feet a few centimeters above the table (~50 cm of height). Rewrote: "FULLY SUSPENDED IN MID-AIR... clear empty gap of 30 centimeters":

Standing vertically on the table, with the rope a decoration above his head.

The user demanded proof of the censorship claim:

"You haven't proven the censorship part. Try Flux, Seedream, Banana — generate a hanging man without any refs at all and we'll find out if there's censorship."

Wrote banana_gen.py (Gemini Image via OpenRouter). Same prompt, three models in parallel:

| Model | Response |

|---|---|

| Flux 2 Pro | HTTP 400 "Request Moderated" — hard reject |

| Nano Banana (Gemini 3.1) | text refusal — "I can't create an image of a man hanging" |

| Seedream 5.0-lite | HTTP 200, perfect — hanging, feet in the air, head sideways |

The "Seedream censors" hypothesis collapsed. No censorship in Seedream, the problem is in multi-ref — when a character sheet is added, the model somehow "dampens" complex actions.

Meanwhile the user cut char_body_front.png and char_body_face.png — separate front and face instead of the full 4096×1737 sheet, where each face becomes ~50×50 pixels after resampling and you can't pull anything out.

Three multi-ref variants in parallel:

| Variant | Refs | Hanging? |

|---|---|---|

| V1 | M1 + face crop | ✅ yes, actually in the air |

| V2 | M1 + face + ready hanging body | ⚠️ half-hanging, foot on the table |

| V3 | M1 + face + front + action-first prompt | ❌ standing on the table |

V1 won. Hypothesis confirmed: char_body_front.png (alive, standing man) actively prevents the model from making a "dead hanging" body. Conclusion: feed face crop for identity, describe pose in words or with a separate pose-ref.

"Only the hanging guy needs to be much closer to the camera."

The agent started turning the "medium close-up" knob in the prompt — the user corrected immediately:

"No, not like that. Keep the background 1:1, move the body physically closer — then it'll be larger."

Don't change the camera, change where the body hangs. Hang it closer to the camera — into the foreground:

Body large, hanging right. But the user:

"Nah, total crap. Try other models."

banana_gen.py was rewritten into a generic OpenRouter-gen (any model via --model). Ran Riverflow v2 Pro, Gemini 3 Pro Image, Seedream 4.5 in parallel:

IMAGE_SAFETY (refusal)Compressed refs to 1280px. Tried without "foreground" — Seedream 4.5 gave a vertically hanging body. The agent called this "the best yet." The user immediately:

"But Seedream changed the room to a different one. And how were you calling GPT-5?"

GPT-5 Image via the same OpenRouter died with unsupported_country_region_territory. The user has a proxy in config, ran it through that — GPT-5 made it (200, 51 sec), but returned safety_violation. Model written off.

"I dropped body_ref in for you, the body is right there."

The user found body_ref.png — a real ref of the hanging-man pose (probably from Higgsfield). New plan: M1 + body_ref + face crop.

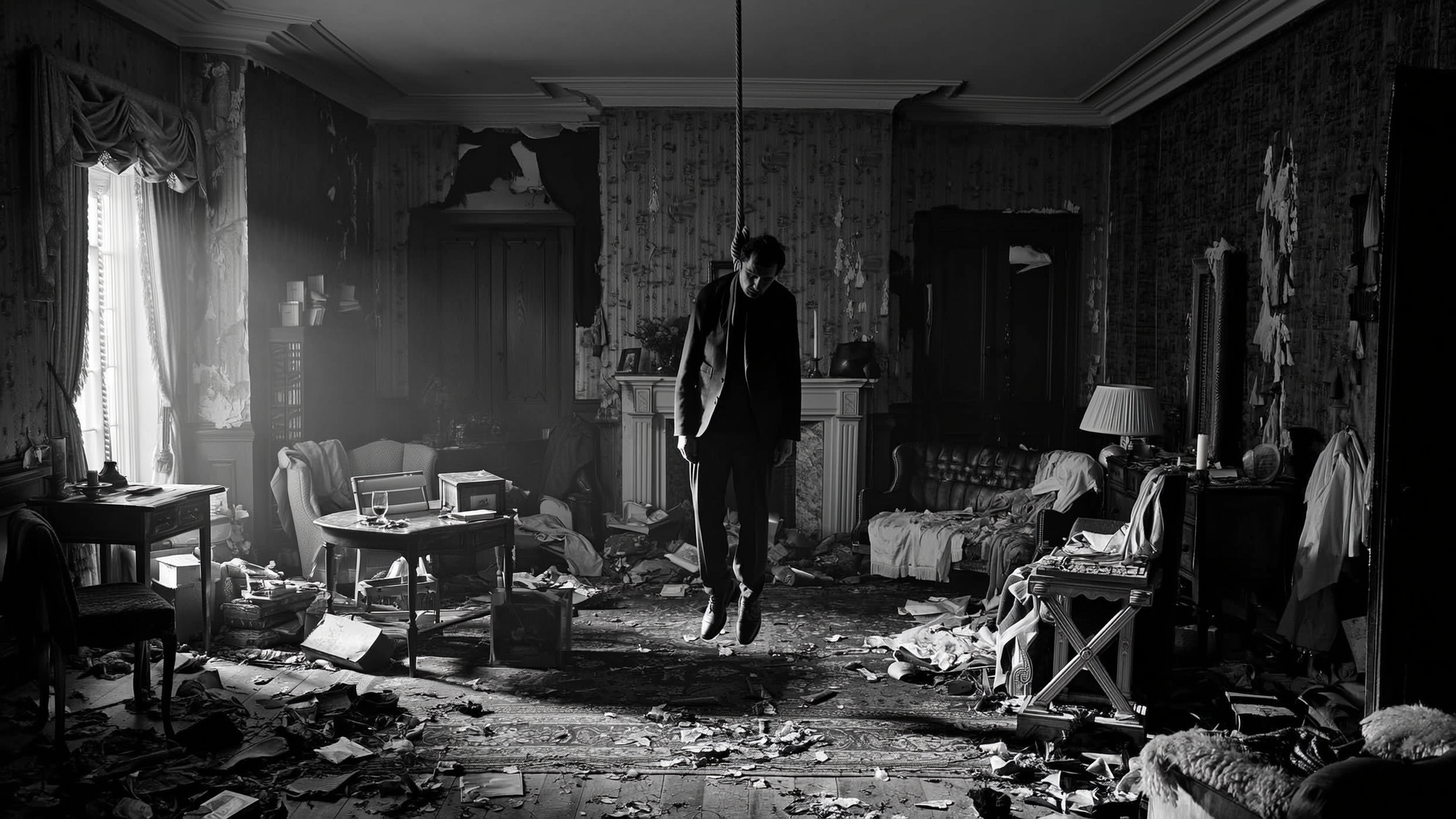

✅ Location 1:1 with M1. ✅ Body hanging vertically, head slumped. ✅ The suit. body_ref provided the pose physics, M1 the location, face crop the identity. Each ref played its role.

"Not approving yet. Need the body closer. And I want to test Higgsfield Soul."

Attempts at "body fills 70% of frame", 1.5m from camera — test_composite_close.png:

And an attempt at 16:9 instead of 21:9 — the body looks larger at the same physics:

Higgsfield has no pay-as-you-go. The user provided a WaveSpeed key ($1 free). Wrote wavespeed_gen.py for POST → task_id → polling. Launched Higgsfield Soul on M1 — the task got stuck in queue for 12+ minutes with executionTime=0ms, cold start.

In parallel — brute-force across endpoints (bytedance/*, kwaivgi/*, alibaba/*, higgsfield/*). Found working ones: bytedance/seedream-v4/edit, bytedance/seedream-v4.5, alibaba/wan-2.7/image-edit. Kling didn't surface anywhere.

Seedream v4.5 Edit with three refs (M1 + body_ref + face):

Physics clear — hanging with a gap, head slumped. Location close to M1, though the furniture differs slightly. Infrastructure note: direct download from d2p7pge43lyniu.cloudfront.net is blocked from RU, downloaded through the proxy.

Tried Wan 2.7 Edit — head almost upright (not limp), body small, face blurred.

Nano Banana Pro (google/nano-banana-pro/edit) on WaveSpeed — same Google censorship.

The agent rolled out a ranking across 4 models. The user:

"I'm lost — which picture is the original, what am I comparing to, what got closer?"

And further, when the agent flubbed comparing body sizes:

"In 45edit the body is smaller than in 5lite_closer, and you're saying the opposite."

And harder:

"I don't get whether you tested the new models on edit or fresh generation. What were you putting in? You're drawing a pile of conclusions and they're wrong. The problem isn't the models, it's what you're feeding in."

And the final one:

"Look, m1_priton_v7_max — that's the only image we approved. After that you generated a pile of versions and the problem is you fed the new versions on top of old versions, then drew conclusions about the models. We need to roll back to 'there is only the room' state."

"What does 'will keep the location' mean? Can you write in a format — what exactly are you putting on the input? Room + short prompt. Or room + face. Or room + face + body. Or room + face + hanged-man ref. There are a million variations. How am I supposed to understand what you're doing?"

After this — a formal format for every test: Input: X + Y → Model: Z → What we learn: A.

Test #1: M1 + the prompt "in this room a corpse is hanging", nothing else. Seedream 5.0-lite via Evolink. Learning: does it hold the location without refs?

Location held, but the physics is dubious — body vertical, feet at table level, can't tell if hanging or standing.

"Why is the body so far from the camera again? Maybe there's a method — you literally draw it a ref where it's needed and at what size?"

The agent suggested Qwen Image Edit Plus via Evolink ($0.022, same endpoint as Seedream): mask + prompt, white area = "the body goes here". Pixel-perfect everything else. Generated the mask — vertical 307×660 ellipse in M1's center (fill zone).

The user formulated the day's main idea:

"Why can't I upload an image-with-mask to the same Seedream? Like — here's the room, here's it with a mask — at the mask spot, the body."

That's a composition mockup: by hand, you crop body_ref, paste it into M1 at the right place and size (like a photomontage), Seedream works with the ready layout — all it has to do is make the seam invisible. Composition control is on you, not on the model.

First mockup: body_ref → crop 409×1152 → resize to 234×660 → paste at (907, 44) on M1. The user immediately:

"Are you serious? In the ref the body is half the frame, on your collage it's even smaller."

Recounted: in body_ref the body is only the lower 60–70% of the picture, so at full height it's 430px = 50% of M1's frame, not 75%. Re-cropped tightly "neck-to-boots", resized to 75% height. The seam stays — that's a feature, not a bug: it tells Seedream "body goes here, this size".

Run: M1 + composite_mockup → Seedream 5.0-lite. Prompt: "Render as one cinematic photo, blend the body seamlessly, preserve the room from reference 1".

✅ Body 75% of frame height, really dominates. ✅ Hanging correctly — feet in the air with a gap, head slumped. ✅ M1 location preserved 1:1. ✅ The mockup seam is gone — model blended the boundaries cleanly. One minus: the face isn't visible (head fully slumped, only the crown), but that's a consequence of the body_ref pose, not a pipeline flaw.

"Yeah, accept."

First approved composition. Added a "Registry of approved artifacts" section to docs/movie_pipeline.md — exact input, prompt, parameters for M1 and Shot 1, plus a Python block for assembling the mockup (crop coordinates, scale, paste point). Every next shot is now done from this template.

"I don't think this belongs in git, it's experiments. Generate a shot of the right door that the bandit will kick in."

Along the way the user raised an important question:

"Do we actually need to generate all these frames as images before we generate the video? Aren't we making things worse for the video model when we feed it frames? Maybe we should start with a master video? When the model isn't anchored to a frame, only to style, it samples from a thicker space of weights."

Technically correct intuition: diffusion without image-conditioning samples from its native distribution. A foreign frame as anchor wastes capacity on reconciling with the style. Video-first was deferred, made the door shot.

First attempt: agent started cropping the bandit for a mockup. The user cut in:

"What bandit, we just need a closed door."

New approach — tighter framing of the same location. Second important note:

"Did you write 45 degrees? If it's straight on, and he later looks at the corpse — gaze into camera, the 180° rule breaks."

Operator's logic. And immediately after — the cardinal rule of minimal prompting:

"Are you sure you need to describe the room again? You can just say: 'give me a tighter shot of the door on the left of the reference'. You're writing 'same wallpaper, same floor, etc.'"

Right. If M1 is in the ref, the model already sees the wallpaper/parquet/window — re-describing is redundant or worse (if the words don't match the ref — the model picks). The minimal prompt describes only what changes (camera framing), the constants are given by the ref.

The prompt collapsed to two phrases:

"Same room as in the reference image, but tighter framing on the closed wooden door. Camera at 45-degree angle. Same style. Empty room, no people."

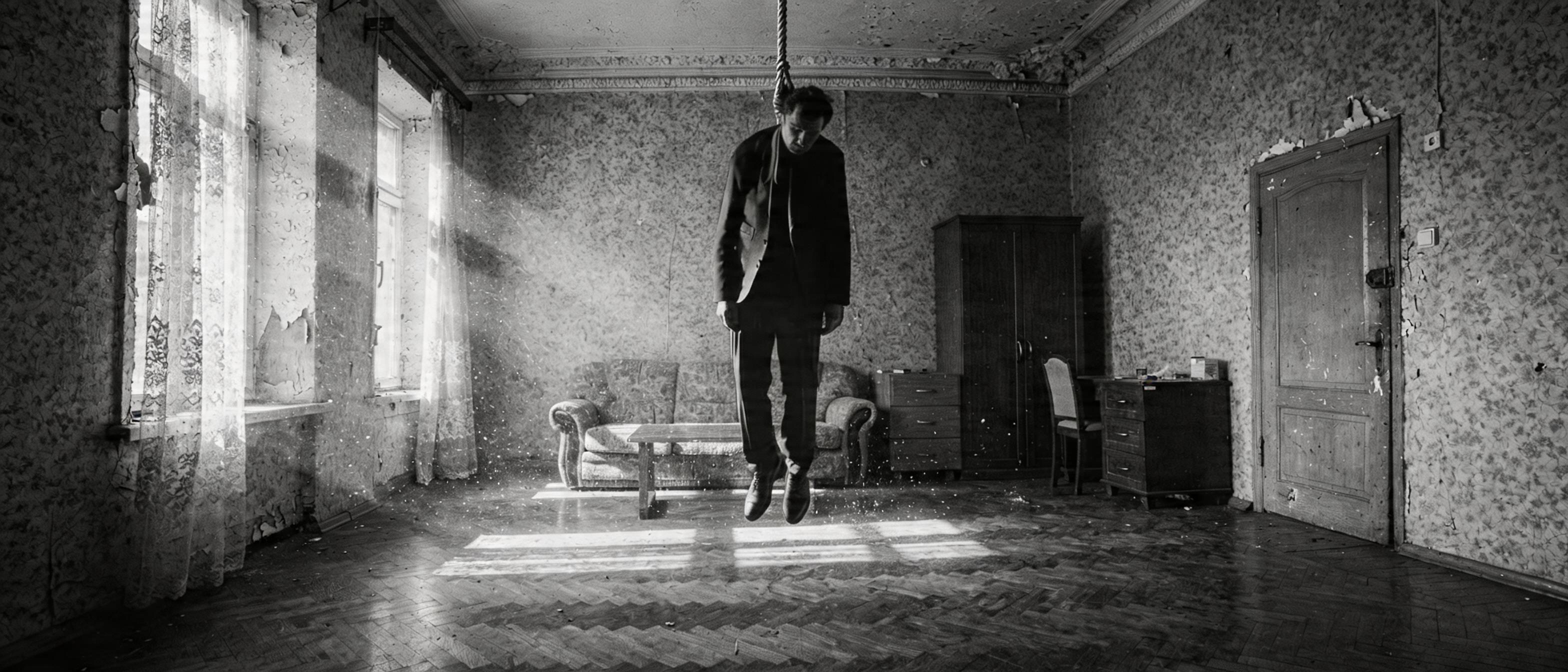

✅ Location recognizable: same wallpaper, door, drawer chest, chair, edge of the wardrobe, parquet. ✅ Door in the center, waiting. ✅ Minimal prompt worked — the model took everything from the ref.

"Came out fine, but not approving. At this framing on the left there'd be the corpse. Maybe not the corpse — a leg or a boot."

A Hitchcock move — the rhyme "the door waits, but a boot is already hanging in the frame." The viewer catches "something terrible is in there" before the bandit barges in. By this point — 11:46, the session approaches its first pause. Plan for the next: assemble a mockup from body_ref (lower body) + shot1_door_closed, send to Seedream, dial in via rembg if the background is messy.

In five hours:

- the stack was picked: Flux 2 Max for location master shots (via OpenRouter), Seedream 5.0-lite for shots with multi-ref (via Evolink), body_ref + face crop as two separate anchors

- two canonical artifacts approved: m1_priton_v7_max.png (master shot of the hideout) and shot1_pinata_corpse.png (corpse in noose)

- the main composition tool was found: layout mockup — by hand, we assemble a collage M1 + body_ref at the right position and size, Seedream polishes the seam

- the minimal-prompt rule was formulated: describe only what changes, constants come from the ref

- censorship limits locked in (Flux, Gemini, GPT-5 don't draw hangings; Seedream without refs — does)

- infrastructure quirks: OpenRouter caps 21:9 to ~2 MP, WaveSpeed cloudfront blocked from RU (proxy mandatory), Higgsfield requires registration